The graphics card makes or breaks the visual quality of a game. However, the release of new and exciting processor technology may lead some to wonder how exactly games, processors (CPUs) and video cards (GPUs) tie together. For example, what does a CPU do for a game, and how, in turn, does the game leverage the processor?

The basic purposes

Let’s start with the basics. Calculating the game’s logic is, obviously, an essential part of what a CPU does. It may be charged with collision detection between characters and objects, saving and loading the game, and tracking inventory and health points, to name a few things. A modern CPU shrugs off any single one of these calculations, but there are a lot of them and they are essential. They are handled by the CPU and the PC’s memory (RAM) alone, without the GPU. Depending on the game’s genre, some of these tasks can be multi-threaded (spread across more cores, boosting performance).

Multi-threading challenges

Something far more demanding, and much harder to multi-thread, is a game’s Artificial Intelligence (AI). How demanding it is depends largely on the genre: grand strategy games, for example, often pit a few very complex AI opponents and allies against each other and can stress the processor quite hard.

Then there is the game’s physics, which is likewise very demanding but, thankfully, far easier to multi-thread than AI. Armor penetration, ballistics simulations, breakable environments, and large numbers of moving props can absolutely hammer performance.
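Physics is easier to spread across cores because, in the simplest case, each object’s motion can be advanced independently of the others. The minimal Python sketch below illustrates that idea under stated assumptions and is not how any particular engine works: the `Body` type is hypothetical, only gravity is integrated with a simple Euler step, and collisions between bodies (which real engines must resolve, and which make the split harder) are ignored.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass

GRAVITY = -9.81  # m/s^2, assumed constant downward acceleration


@dataclass
class Body:
    """Hypothetical physics object: only vertical position and velocity."""
    y: float   # height in metres
    vy: float  # vertical velocity in m/s


def step_chunk(bodies, dt):
    """Advance one slice of bodies by dt using explicit Euler integration.

    Each body reads and writes only its own state, so slices never conflict
    and can safely be updated by different worker threads.
    """
    for b in bodies:
        b.vy += GRAVITY * dt
        b.y += b.vy * dt


def step_world(bodies, dt, workers=4):
    """Split the body list into roughly equal slices and update them in parallel."""
    size = max(1, -(-len(bodies) // workers))  # ceiling division
    chunks = [bodies[i:i + size] for i in range(0, len(bodies), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # list() waits for every slice and re-raises any worker exception
        list(pool.map(step_chunk, chunks, [dt] * len(chunks)))


world = [Body(y=10.0, vy=0.0) for _ in range(1000)]
step_world(world, dt=0.1)
print(round(world[0].y, 4), round(world[0].vy, 4))
```

In standard CPython the global interpreter lock means this sketch shows the structure of the split rather than a real speedup; an engine does the same kind of partitioning in native code, where each worker thread can run on its own core.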

A processor is also in charge of input and audio processing. How much audio costs really depends on the game and its audio API or technology. Today’s CPUs generally have no problem handling input.

The call of the draw

While everything I’ve mentioned so far will tax a CPU, the single hardest job the processor has in most modern games is issuing draw calls: telling the GPU what to draw on screen and how to draw it. For all its power, the GPU cannot fetch data directly from storage, and it was not designed to handle certain tasks. With the processor as its boss, though, giving it the right orders and making sure it accomplishes its goals, miracles can happen. Unfortunately, games, drivers, and APIs sometimes make this cooperation less than ideal, but thankfully even that can often be fixed in software. All of this feeds into my next point: every game is different.
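One common way to reduce the CPU cost of draw calls is batching, or instancing: grouping objects that share the same mesh and material so they can be submitted together. The Python sketch below does not call any real graphics API; `RenderObject` and the mesh and material names are hypothetical, and the code only counts how many submissions the CPU would have to prepare with and without grouping.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RenderObject:
    """Hypothetical scene object; not tied to any real engine or API."""
    mesh: str        # hypothetical mesh identifier
    material: str    # hypothetical material identifier
    position: tuple  # world-space position (x, y, z)


def naive_draw_calls(objects):
    """One draw call per object: the CPU prepares and submits each separately."""
    return [(o.mesh, o.material, [o.position]) for o in objects]


def batched_draw_calls(objects):
    """Group objects sharing a mesh and material into one instanced call each."""
    groups = defaultdict(list)
    for o in objects:
        groups[(o.mesh, o.material)].append(o.position)
    return [(mesh, mat, positions) for (mesh, mat), positions in groups.items()]


# A toy scene: 500 identical trees and 200 identical rocks.
scene = [RenderObject("tree", "bark", (x, 0, 0)) for x in range(500)]
scene += [RenderObject("rock", "stone", (0, 0, z)) for z in range(200)]
print(len(naive_draw_calls(scene)), len(batched_draw_calls(scene)))  # 700 2
```

A real renderer still has to upload per-instance data such as positions, so the actual savings depend on the engine, driver and API, but fewer, larger submissions generally mean less per-call CPU overhead.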

Optimization done well… and not so well

For example, ARMA 3 is, in its current state, a mostly single-threaded game: it pushes one processor core to the limit. It can still draw some extra performance from a multi-core CPU (as it can offload SOME tasks), but the operating system and background tasks also eat up precious resources, so the bottleneck is clear. There are several other reasons ARMA 3 is so tough on a CPU, foremost among them its sheer number of draw calls. Not all CPUs are equally good at issuing draw calls, at least not in a single-threaded scenario.
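Why extra cores help a mostly single-threaded game so little can be put into numbers with Amdahl’s law, a general rule of thumb (not something measured in ARMA 3) for the ideal speedup when only a fraction p of the work can be spread across n cores: S = 1 / ((1 − p) + p / n). This minimal Python sketch assumes a perfectly divisible workload and ignores the OS, background tasks and draw-call overhead; the parallel fractions used are made-up illustrative values, not measurements of any game.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Ideal speedup from Amdahl's law: S = 1 / ((1 - p) + p / n).

    parallel_fraction (p): share of the work that can run on many cores.
    cores (n): number of cores the parallel share is split across.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial = 1.0 - parallel_fraction  # work stuck on a single core
    return 1.0 / (serial + parallel_fraction / cores)


# Illustrative (assumed) parallel fractions: a mostly serial game vs. a well-threaded one.
for p in (0.3, 0.9):
    for n in (2, 4, 8):
        print(f"p={p:.1f} cores={n}: {amdahl_speedup(p, n):.2f}x")
```

With only 30% of the work parallelizable, going from 2 to 8 cores raises the ideal speedup from about 1.18x to only about 1.36x, while a 90% parallel workload climbs from about 1.82x to about 4.71x.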

Metro: Last Light Redux is an excellent example of a well-threaded game. Beautiful and optimized, it uses as many threads as the user can throw at it. Its PhysX physics engine is multi-threaded too, allowing people with powerful CPUs to maintain good framerates no matter the chaos on screen. Of course, an open-world game on Metro’s engine would be MUCH heavier, but it is still a great example of how well DirectX 11 can leverage a CPU and GPU when the developers are experienced. Although the game is very draw-call heavy, it doesn’t clog a single core or thread with these demands.

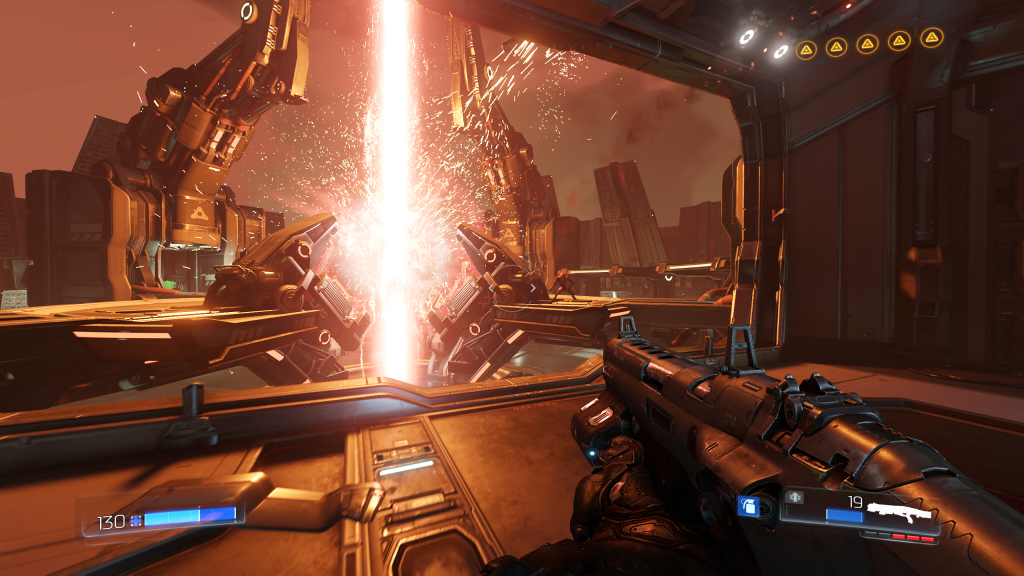

Finally, we come to the new APIs. Running on the id Tech 6 engine, which supports Vulkan, DOOM doesn’t gobble up CPU resources, even with its surprisingly good AI and physics. This is partly thanks to how well it leverages CPU resources and cores. Sure, there are some kinks to work out in how it handles the new Ryzen architecture, but id has pledged that future engine revisions will be better. Even now, the experience is usually silky smooth.

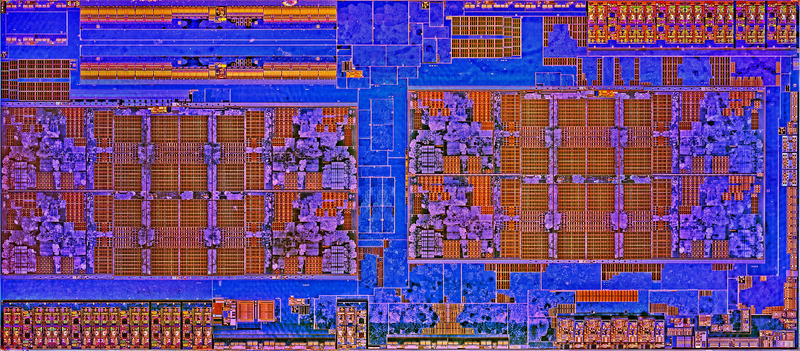

When it comes to older games and emulators, things become both simpler and more complicated. Yes, today’s CPUs are faster, but different architectures see gains in different areas. For example, AMD’s new Ryzen architecture may be a better choice for certain old game emulators because its instruction decoder can handle more complex instructions per cycle. On the other hand, a game like Fallout 3 tends to prefer Kaby Lake’s generally higher instructions per clock (IPC) and raw clock speeds. Most of the time, though, emulators and old games favor strong single- and dual-core performance and high IPC. Exceptions exist, but virtually any modern CPU will provide a smooth experience, far better than what was possible when those old games were released.

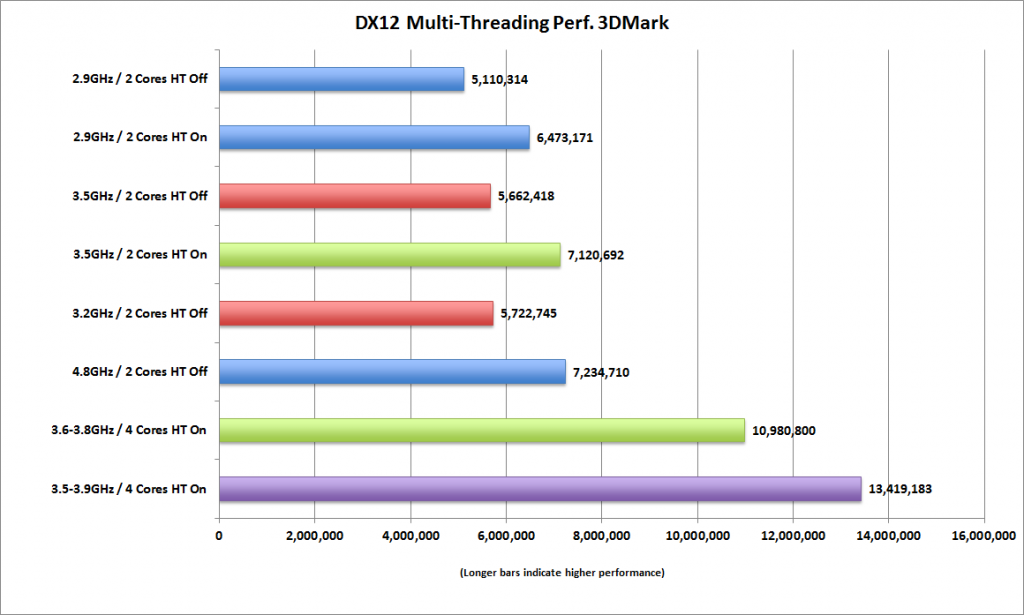

The multi-core future

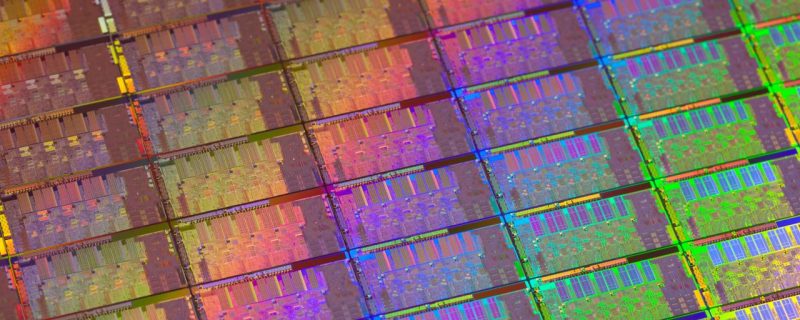

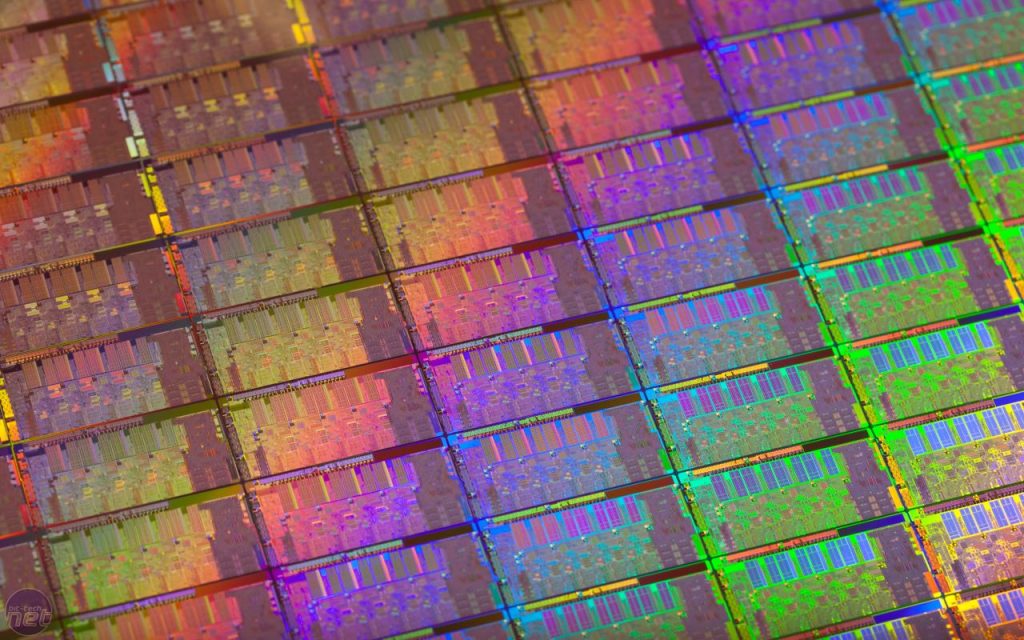

So what are the general trends in processors and video games right now? More cores are slowly but surely becoming the norm. RAM speed and latency can have a major impact on performance with both Intel and AMD CPUs. Instructions-per-clock gains can still be squeezed out, but doing so will become harder and harder. Beyond that, process maturity at all major foundries and chip density will dictate actual clock speeds.

As you can see, every game has its own quirks that can stress different parts of a central processor. Both AMD and Intel CPUs have upsides and drawbacks that manifest differently in games (and in other programs too). The CPU race is on again, this time with faster and more mature manufacturing nodes, higher clocks and more refined architectures as the issues of the day. We’re lucky that any decent, modern combination of hardware is adequate for a very good experience in pretty much every use case today.